Compare commits

17 Commits

| Author | SHA1 | Date | |
|---|---|---|---|
| | 685d429c81 | | |
| | 13c95fe094 | | |
| | 82cf0e8e4a | | |
| | cddc2ff658 | | |
| | 98add035f2 | | |
| | 9ff1944d06 | | |
| | 3d96c8ab9f | | |
| | f115f40a77 | | |
| | 2b4e485eb0 | | |
| | 01aeba2f7a | | |
| | 3e65d21817 | | |
| | b7f191a9f5 | | |
| | e83bf0e1e4 | | |
| | f07aaffda0 | | |
| | 20355e0c79 | | |
| | 192f672f91 | | |
| | 696e1a6741 | | |

128

CODE_OF_CONDUCT.md

Normal file


@@ -0,0 +1,128 @@

# Contributor Covenant Code of Conduct

## Our Pledge

We as members, contributors, and leaders pledge to make participation in our
community a harassment-free experience for everyone, regardless of age, body
size, visible or invisible disability, ethnicity, sex characteristics, gender
identity and expression, level of experience, education, socio-economic status,
nationality, personal appearance, race, religion, or sexual identity
and orientation.

We pledge to act and interact in ways that contribute to an open, welcoming,
diverse, inclusive, and healthy community.

## Our Standards

Examples of behavior that contributes to a positive environment for our
community include:

* Demonstrating empathy and kindness toward other people
* Being respectful of differing opinions, viewpoints, and experiences
* Giving and gracefully accepting constructive feedback
* Accepting responsibility and apologizing to those affected by our mistakes,
  and learning from the experience
* Focusing on what is best not just for us as individuals, but for the
  overall community

Examples of unacceptable behavior include:

* The use of sexualized language or imagery, and sexual attention or
  advances of any kind
* Trolling, insulting or derogatory comments, and personal or political attacks
* Public or private harassment
* Publishing others' private information, such as a physical or email
  address, without their explicit permission
* Other conduct which could reasonably be considered inappropriate in a
  professional setting

## Enforcement Responsibilities

Community leaders are responsible for clarifying and enforcing our standards of
acceptable behavior and will take appropriate and fair corrective action in
response to any behavior that they deem inappropriate, threatening, offensive,
or harmful.

Community leaders have the right and responsibility to remove, edit, or reject
comments, commits, code, wiki edits, issues, and other contributions that are
not aligned to this Code of Conduct, and will communicate reasons for moderation
decisions when appropriate.

## Scope

This Code of Conduct applies within all community spaces, and also applies when
an individual is officially representing the community in public spaces.
Examples of representing our community include using an official e-mail address,
posting via an official social media account, or acting as an appointed
representative at an online or offline event.

## Enforcement

Instances of abusive, harassing, or otherwise unacceptable behavior may be
reported to the community leaders responsible for enforcement at
xintao.wang@outlook.com or xintaowang@tencent.com.
All complaints will be reviewed and investigated promptly and fairly.

All community leaders are obligated to respect the privacy and security of the
reporter of any incident.

## Enforcement Guidelines

Community leaders will follow these Community Impact Guidelines in determining
the consequences for any action they deem in violation of this Code of Conduct:

### 1. Correction

**Community Impact**: Use of inappropriate language or other behavior deemed
unprofessional or unwelcome in the community.

**Consequence**: A private, written warning from community leaders, providing
clarity around the nature of the violation and an explanation of why the
behavior was inappropriate. A public apology may be requested.

### 2. Warning

**Community Impact**: A violation through a single incident or series
of actions.

**Consequence**: A warning with consequences for continued behavior. No
interaction with the people involved, including unsolicited interaction with
those enforcing the Code of Conduct, for a specified period of time. This
includes avoiding interactions in community spaces as well as external channels
like social media. Violating these terms may lead to a temporary or
permanent ban.

### 3. Temporary Ban

**Community Impact**: A serious violation of community standards, including
sustained inappropriate behavior.

**Consequence**: A temporary ban from any sort of interaction or public
communication with the community for a specified period of time. No public or
private interaction with the people involved, including unsolicited interaction
with those enforcing the Code of Conduct, is allowed during this period.
Violating these terms may lead to a permanent ban.

### 4. Permanent Ban

**Community Impact**: Demonstrating a pattern of violation of community
standards, including sustained inappropriate behavior, harassment of an
individual, or aggression toward or disparagement of classes of individuals.

**Consequence**: A permanent ban from any sort of public interaction within
the community.

## Attribution

This Code of Conduct is adapted from the [Contributor Covenant][homepage],
version 2.0, available at
https://www.contributor-covenant.org/version/2/0/code_of_conduct.html.

Community Impact Guidelines were inspired by [Mozilla's code of conduct
enforcement ladder](https://github.com/mozilla/diversity).

[homepage]: https://www.contributor-covenant.org

For answers to common questions about this code of conduct, see the FAQ at
https://www.contributor-covenant.org/faq. Translations are available at
https://www.contributor-covenant.org/translations.

10

FAQ.md


@@ -1,9 +1,7 @@

# FAQ

1. **What is the difference of `--netscale` and `outscale`?**
1. **How to select models?**<br>
A: Please refer to [docs/model_zoo.md](docs/model_zoo.md)

A: TODO.

1. **How to select models?**

A: TODO.
1. **Can `face_enhance` be used for anime images/animation videos?**<br>
A: No, it can only be used for real faces. It is recommended not to use this option for anime images/animation videos to save GPU memory.

130

README.md


@@ -1,4 +1,8 @@

# Real-ESRGAN
<p align="center">
<img src="assets/realesrgan_logo.png" height=120>
</p>

## <div align="center"><b><a href="README.md">English</a> | <a href="README_CN.md">简体中文</a></b></div>

[](https://github.com/xinntao/Real-ESRGAN/releases)
[](https://pypi.org/project/realesrgan/)

@@ -8,31 +12,20 @@

[](https://github.com/xinntao/Real-ESRGAN/blob/master/.github/workflows/pylint.yml)
[](https://github.com/xinntao/Real-ESRGAN/blob/master/.github/workflows/publish-pip.yml)

[English](README.md) **|** [简体中文](README_CN.md)
:fire: Update the **RealESRGAN AnimeVideo-v3** model (**updated small model for anime videos**). Please see [anime video models](docs/anime_video_model.md) and [comparisons](docs/anime_comparisons.md) for more details.

1. [Colab Demo](https://colab.research.google.com/drive/1k2Zod6kSHEvraybHl50Lys0LerhyTMCo?usp=sharing) for Real-ESRGAN <a href="https://colab.research.google.com/drive/1k2Zod6kSHEvraybHl50Lys0LerhyTMCo?usp=sharing"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="google colab logo"></a>.
2. Portable [Windows](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.4/realesrgan-ncnn-vulkan-20210901-windows.zip) / [Linux](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.4/realesrgan-ncnn-vulkan-20210901-ubuntu.zip) / [MacOS](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.4/realesrgan-ncnn-vulkan-20210901-macos.zip) **executable files for Intel/AMD/Nvidia GPU**. You can find more information [here](#Portable-executable-files). The ncnn implementation is in [Real-ESRGAN-ncnn-vulkan](https://github.com/xinntao/Real-ESRGAN-ncnn-vulkan).
2. [Colab Demo](https://colab.research.google.com/drive/1yNl9ORUxxlL4N0keJa2SEPB61imPQd1B?usp=sharing) for Real-ESRGAN (**anime videos**) <a href="https://colab.research.google.com/drive/1yNl9ORUxxlL4N0keJa2SEPB61imPQd1B?usp=sharing"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="google colab logo"></a>.
3. Portable [Windows](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesrgan-ncnn-vulkan-20220424-windows.zip) / [Linux](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesrgan-ncnn-vulkan-20220424-ubuntu.zip) / [MacOS](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesrgan-ncnn-vulkan-20220424-macos.zip) **executable files for Intel/AMD/Nvidia GPU**. You can find more information [here](#Portable-executable-files). The ncnn implementation is in [Real-ESRGAN-ncnn-vulkan](https://github.com/xinntao/Real-ESRGAN-ncnn-vulkan).

Thanks for your interest and use :-) There are still many problems with the anime/illustration model, mainly: 1. It cannot deal with videos; 2. It is not aware of depth/depth-of-field; 3. It is not adjustable; 4. It may change the original style. Thanks for your valuable feedback and suggestions. All the feedback is tracked in [feedback.md](feedback.md). Hopefully, a new model will be available soon.

Thanks for your attention and use :-) The anime/illustration model still has many problems, mainly: 1. It cannot process videos; 2. Depth-of-field blur is handled poorly; 3. It is not adjustable, and the effect is too strong; 4. It changes the original style. Everyone has given very good feedback, which will be gradually updated in [this document](feedback.md). Hopefully, a new model will be available before long.

Real-ESRGAN aims at developing **Practical Algorithms for General Image Restoration**.<br>
Real-ESRGAN aims at developing **Practical Algorithms for General Image/Video Restoration**.<br>
We extend the powerful ESRGAN to a practical restoration application (namely, Real-ESRGAN), which is trained with pure synthetic data.

:art: Real-ESRGAN needs your contributions. Any contributions are welcome, such as new features/models/typo fixes/suggestions/maintenance, *etc*. See [CONTRIBUTING.md](CONTRIBUTING.md). All contributors are listed [here](README.md#hugs-acknowledgement).

:question: Frequently Asked Questions can be found in [FAQ.md](FAQ.md) (Well, it is still empty there =-=||).
:question: Frequently Asked Questions can be found in [FAQ.md](FAQ.md).

:triangular_flag_on_post: **Updates**
- :white_check_mark: Add the ncnn implementation [Real-ESRGAN-ncnn-vulkan](https://github.com/xinntao/Real-ESRGAN-ncnn-vulkan).
- :white_check_mark: Add [*RealESRGAN_x4plus_anime_6B.pth*](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.4/RealESRGAN_x4plus_anime_6B.pth), which is optimized for **anime** images with much smaller model size. More details and comparisons with [waifu2x](https://github.com/nihui/waifu2x-ncnn-vulkan) are in [**anime_model.md**](docs/anime_model.md)
- :white_check_mark: Support finetuning on your own data or paired data (*i.e.*, finetuning ESRGAN). See [here](Training.md#Finetune-Real-ESRGAN-on-your-own-dataset)
- :white_check_mark: Integrate [GFPGAN](https://github.com/TencentARC/GFPGAN) to support **face enhancement**.
- :white_check_mark: Integrated to [Huggingface Spaces](https://huggingface.co/spaces) with [Gradio](https://github.com/gradio-app/gradio). See [Gradio Web Demo](https://huggingface.co/spaces/akhaliq/Real-ESRGAN). Thanks [@AK391](https://github.com/AK391)
- :white_check_mark: Support arbitrary scale with `--outscale` (It actually further resizes outputs with `LANCZOS4`). Add *RealESRGAN_x2plus.pth* model.
- :white_check_mark: [The inference code](inference_realesrgan.py) supports: 1) **tile** options; 2) images with **alpha channel**; 3) **gray** images; 4) **16-bit** images.
- :white_check_mark: The training codes have been released. A detailed guide can be found in [Training.md](Training.md).
:milky_way: Thanks for your valuable feedback and suggestions. All the feedback is tracked in [feedback.md](feedback.md).

---

@@ -45,6 +38,52 @@ Other recommended projects:<br>

---

<!---------------------------------- Updates --------------------------->
<details>
<summary>🚩<b>Updates</b></summary>

- ✅ Update the **RealESRGAN AnimeVideo-v3** model. Please see [anime video models](docs/anime_video_model.md) and [comparisons](docs/anime_comparisons.md) for more details.
- ✅ Add small models for anime videos. More details are in [anime video models](docs/anime_video_model.md).
- ✅ Add the ncnn implementation [Real-ESRGAN-ncnn-vulkan](https://github.com/xinntao/Real-ESRGAN-ncnn-vulkan).
- ✅ Add [*RealESRGAN_x4plus_anime_6B.pth*](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.4/RealESRGAN_x4plus_anime_6B.pth), which is optimized for **anime** images with much smaller model size. More details and comparisons with [waifu2x](https://github.com/nihui/waifu2x-ncnn-vulkan) are in [**anime_model.md**](docs/anime_model.md)
- ✅ Support finetuning on your own data or paired data (*i.e.*, finetuning ESRGAN). See [here](Training.md#Finetune-Real-ESRGAN-on-your-own-dataset)
- ✅ Integrate [GFPGAN](https://github.com/TencentARC/GFPGAN) to support **face enhancement**.
- ✅ Integrated to [Huggingface Spaces](https://huggingface.co/spaces) with [Gradio](https://github.com/gradio-app/gradio). See [Gradio Web Demo](https://huggingface.co/spaces/akhaliq/Real-ESRGAN). Thanks [@AK391](https://github.com/AK391)
- ✅ Support arbitrary scale with `--outscale` (It actually further resizes outputs with `LANCZOS4`). Add *RealESRGAN_x2plus.pth* model.
- ✅ [The inference code](inference_realesrgan.py) supports: 1) **tile** options; 2) images with **alpha channel**; 3) **gray** images; 4) **16-bit** images.
- ✅ The training codes have been released. A detailed guide can be found in [Training.md](Training.md).

</details>

<!---------------------------------- Projects that use Real-ESRGAN --------------------------->
<details>
<summary>🧩<b>Projects that use Real-ESRGAN</b></summary>

👋 If you develop/use Real-ESRGAN in your projects, please let me know.

- NCNN-Android: [RealSR-NCNN-Android](https://github.com/tumuyan/RealSR-NCNN-Android) by [tumuyan](https://github.com/tumuyan)
- VapourSynth: [vs-realesrgan](https://github.com/HolyWu/vs-realesrgan) by [HolyWu](https://github.com/HolyWu)
- NCNN: [Real-ESRGAN-ncnn-vulkan](https://github.com/xinntao/Real-ESRGAN-ncnn-vulkan)

**GUI**

- [Waifu2x-Extension-GUI](https://github.com/AaronFeng753/Waifu2x-Extension-GUI) by [AaronFeng753](https://github.com/AaronFeng753)
- [Squirrel-RIFE](https://github.com/Justin62628/Squirrel-RIFE) by [Justin62628](https://github.com/Justin62628)
- [Real-GUI](https://github.com/scifx/Real-GUI) by [scifx](https://github.com/scifx)
- [Real-ESRGAN_GUI](https://github.com/net2cn/Real-ESRGAN_GUI) by [net2cn](https://github.com/net2cn)
- [Real-ESRGAN-EGUI](https://github.com/WGzeyu/Real-ESRGAN-EGUI) by [WGzeyu](https://github.com/WGzeyu)
- [anime_upscaler](https://github.com/shangar21/anime_upscaler) by [shangar21](https://github.com/shangar21)

</details>

<!---------------------------------- Demo videos --------------------------->
<details open>
<summary>👀<b>Demo videos</b></summary>

- [Havoc in Heaven clip](https://www.bilibili.com/video/BV1ja41117zb)

</details>

### :book: Real-ESRGAN: Training Real-World Blind Super-Resolution with Pure Synthetic Data

> [[Paper](https://arxiv.org/abs/2107.10833)]   [Project Page]   [[YouTube Video](https://www.youtube.com/watch?v=fxHWoDSSvSc)]   [[Bilibili walkthrough](https://www.bilibili.com/video/BV1H34y1m7sS/)]   [[Poster](https://xinntao.github.io/projects/RealESRGAN_src/RealESRGAN_poster.pdf)]   [[PPT slides](https://docs.google.com/presentation/d/1QtW6Iy8rm8rGLsJ0Ldti6kP-7Qyzy6XL/edit?usp=sharing&ouid=109799856763657548160&rtpof=true&sd=true)]<br>

@@ -80,21 +119,22 @@ If you have some images that Real-ESRGAN could not well restored, please also op

### Portable executable files

You can download [Windows](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.1.2/realesrgan-ncnn-vulkan-20210801-windows.zip) / [Linux](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.1.2/realesrgan-ncnn-vulkan-20210801-ubuntu.zip) / [MacOS](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.1.2/realesrgan-ncnn-vulkan-20210801-macos.zip) **executable files for Intel/AMD/Nvidia GPU**.
You can download [Windows](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesrgan-ncnn-vulkan-20220424-windows.zip) / [Linux](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesrgan-ncnn-vulkan-20220424-ubuntu.zip) / [MacOS](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesrgan-ncnn-vulkan-20220424-macos.zip) **executable files for Intel/AMD/Nvidia GPU**.

This executable file is **portable** and includes all the binaries and models required. No CUDA or PyTorch environment is needed.<br>

You can simply run the following command (the Windows example, more information is in the README.md of each executable files):

```bash
./realesrgan-ncnn-vulkan.exe -i input.jpg -o output.png
./realesrgan-ncnn-vulkan.exe -i input.jpg -o output.png -n model_name
```

We have provided three models:
We have provided five models:

1. realesrgan-x4plus (default)
2. realesrnet-x4plus
3. realesrgan-x4plus-anime (optimized for anime images, small model size)
4. realesr-animevideov3 (animation video)

You can use the `-n` argument for other models, for example, `./realesrgan-ncnn-vulkan.exe -i input.jpg -o output.png -n realesrnet-x4plus`
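The `-n` selection described above can be wrapped in a small script. The following is only a sketch: `build_command` and `KNOWN_MODELS` are hypothetical names introduced here, and the helper uses only the `-i`, `-o`, and `-n` flags and the four model names that appear in this hunk. It always passes `-n` explicitly, because the model list marks `realesrgan-x4plus` as the default while the updated help text in the next hunk names `realesr-animevideov3`.

```python
from typing import List

# Model names exactly as listed in the README hunk above.
KNOWN_MODELS = (
    "realesrgan-x4plus",
    "realesrnet-x4plus",
    "realesrgan-x4plus-anime",
    "realesr-animevideov3",
)


def build_command(input_path: str, output_path: str, model_name: str,
                  exe: str = "./realesrgan-ncnn-vulkan.exe") -> List[str]:
    """Build an argv list for the portable executable (hypothetical helper).

    The model name is always passed with -n so the result does not depend
    on whichever default a given release of the executable uses.
    """
    if model_name not in KNOWN_MODELS:
        raise ValueError(f"unknown model name: {model_name!r}")
    return [exe, "-i", input_path, "-o", output_path, "-n", model_name]


cmd = build_command("input.jpg", "output.png", "realesrnet-x4plus")
print(" ".join(cmd))
# prints: ./realesrgan-ncnn-vulkan.exe -i input.jpg -o output.png -n realesrnet-x4plus
```

The returned list can be handed to `subprocess.run(cmd, check=True)` on a machine where the executable has been downloaded.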


@@ -107,23 +147,21 @@ You can use the `-n` argument for other models, for example, `./realesrgan-ncnn-

```console
Usage: realesrgan-ncnn-vulkan.exe -i infile -o outfile [options]...

  -h                   show this help
  -v                   verbose output
  -i input-path        input image path (jpg/png/webp) or directory
  -o output-path       output image path (jpg/png/webp) or directory
  -s scale             upscale ratio (4, default=4)
  -s scale             upscale ratio (can be 2, 3, 4. default=4)
  -t tile-size         tile size (>=32/0=auto, default=0) can be 0,0,0 for multi-gpu
  -m model-path        folder path to pre-trained models(default=models)
  -n model-name        model name (default=realesrgan-x4plus, can be realesrgan-x4plus | realesrgan-x4plus-anime | realesrnet-x4plus)
  -g gpu-id            gpu device to use (default=0) can be 0,1,2 for multi-gpu
  -m model-path        folder path to the pre-trained models. default=models
  -n model-name        model name (default=realesr-animevideov3, can be realesr-animevideov3 | realesrgan-x4plus | realesrgan-x4plus-anime | realesrnet-x4plus)
  -g gpu-id            gpu device to use (default=auto) can be 0,1,2 for multi-gpu
  -j load:proc:save    thread count for load/proc/save (default=1:2:2) can be 1:2,2,2:2 for multi-gpu
  -x                   enable tta mode
  -x                   enable tta mode"
  -f format            output image format (jpg/png/webp, default=ext/png)
  -v                   verbose output
```

Note that it may introduce block inconsistency (and also generate slightly different results from the PyTorch implementation), because this executable file first crops the input image into several tiles, and then processes them separately, finally stitches together.
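The crop, process, and stitch sequence in the note above can be illustrated with a toy example. This is a minimal sketch, not the executable's implementation: images are plain nested lists, `upscale_nearest` is a stand-in for the real network, and all function names are hypothetical. It shows that tiling is harmless when each output pixel depends only on its own input pixel, and that results diverge from whole-image processing once the operation depends on its surroundings, which is the source of the block inconsistency.

```python
from typing import Callable, List

Image = List[List[float]]  # rows of pixel values; a stand-in for a real image


def upscale_nearest(img: Image, scale: int) -> Image:
    """Nearest-neighbour upscaling; a placeholder for the real network."""
    out: Image = []
    for row in img:
        wide = [v for v in row for _ in range(scale)]
        out.extend([list(wide) for _ in range(scale)])
    return out


def upscale_minus_mean(img: Image, scale: int) -> Image:
    """Context-dependent placeholder: the output depends on the whole input block."""
    flat = [v for row in img for v in row]
    mean = sum(flat) / len(flat)
    return upscale_nearest([[v - mean for v in row] for row in img], scale)


def process_in_tiles(img: Image, tile: int, scale: int,
                     fn: Callable[[Image, int], Image]) -> Image:
    """Crop img into tile x tile blocks, run fn on each block, stitch the results."""
    height, width = len(img), len(img[0])
    out = [[0.0] * (width * scale) for _ in range(height * scale)]
    for top in range(0, height, tile):
        for left in range(0, width, tile):
            block = [row[left:left + tile] for row in img[top:top + tile]]
            result = fn(block, scale)
            for dy, out_row in enumerate(result):
                y = top * scale + dy
                out[y][left * scale:left * scale + len(out_row)] = out_row
    return out


img = [[float(x + 4 * y) for x in range(4)] for y in range(4)]

# Per-pixel operation: tiled and whole-image results are identical.
same = process_in_tiles(img, 2, 2, upscale_nearest) == upscale_nearest(img, 2)

# Context-dependent operation: each tile sees a different mean, so seams appear.
differs = process_in_tiles(img, 2, 2, upscale_minus_mean) != upscale_minus_mean(img, 2)

print(same, differs)  # True True
```

A real super-resolution network behaves like the second case, since each output pixel depends on a neighbourhood of input pixels that a tile border cuts off.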

This executable file is based on the wonderful [Tencent/ncnn](https://github.com/Tencent/ncnn) and [realsr-ncnn-vulkan](https://github.com/nihui/realsr-ncnn-vulkan) by [nihui](https://github.com/nihui).

---

## :wrench: Dependencies and Installation

@@ -166,7 +204,7 @@ wget https://github.com/xinntao/Real-ESRGAN/releases/download/v0.1.0/RealESRGAN_

Inference!

```bash
python inference_realesrgan.py --model_path experiments/pretrained_models/RealESRGAN_x4plus.pth --input inputs --face_enhance
python inference_realesrgan.py -n RealESRGAN_x4plus -i inputs --face_enhance
```

Results are in the `results` folder

@@ -184,7 +222,7 @@ Pre-trained models: [RealESRGAN_x4plus_anime_6B](https://github.com/xinntao/Real

```bash
# download model
wget https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.4/RealESRGAN_x4plus_anime_6B.pth -P experiments/pretrained_models
# inference
python inference_realesrgan.py --model_path experiments/pretrained_models/RealESRGAN_x4plus_anime_6B.pth --input inputs
python inference_realesrgan.py -n RealESRGAN_x4plus_anime_6B -i inputs
```

Results are in the `results` folder

@@ -194,37 +232,25 @@ Results are in the `results` folder

1. You can use X4 model for **arbitrary output size** with the argument `outscale`. The program will further perform cheap resize operation after the Real-ESRGAN output.

```console
Usage: python inference_realesrgan.py --model_path experiments/pretrained_models/RealESRGAN_x4plus.pth --input infile --output outfile [options]...
Usage: python inference_realesrgan.py -n RealESRGAN_x4plus -i infile -o outfile [options]...

A common command: python inference_realesrgan.py --model_path experiments/pretrained_models/RealESRGAN_x4plus.pth --input infile --netscale 4 --outscale 3.5 --half --face_enhance
A common command: python inference_realesrgan.py -n RealESRGAN_x4plus -i infile --outscale 3.5 --face_enhance

  -h                   show this help
  --input              Input image or folder. Default: inputs
  --output             Output folder. Default: results
  --model_path         Path to the pre-trained model. Default: experiments/pretrained_models/RealESRGAN_x4plus.pth
  --netscale           Upsample scale factor of the network. Default: 4
  --outscale           The final upsampling scale of the image. Default: 4
  -i --input           Input image or folder. Default: inputs
  -o --output          Output folder. Default: results
  -n --model_name      Model name. Default: RealESRGAN_x4plus
  -s, --outscale       The final upsampling scale of the image. Default: 4
  --suffix             Suffix of the restored image. Default: out
  --tile               Tile size, 0 for no tile during testing. Default: 0
  -t, --tile           Tile size, 0 for no tile during testing. Default: 0
  --face_enhance       Whether to use GFPGAN to enhance face. Default: False
  --half               Whether to use half precision during inference. Default: False
  --fp32               Use fp32 precision during inference. Default: fp16 (half precision).
  --ext                Image extension. Options: auto | jpg | png, auto means using the same extension as inputs. Default: auto
```
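To make the relationship between the network scale and `outscale` concrete, the sketch below computes the two image sizes involved. It is an illustration under stated assumptions, not the script's code: `plan_output_sizes` is a hypothetical helper, and it assumes the final size is the input size multiplied by `outscale` and truncated to an integer. The Updates list in this README says the extra resize uses `LANCZOS4`; with OpenCV that would correspond to `cv2.resize(..., interpolation=cv2.INTER_LANCZOS4)`, which is left out here so the example runs without third-party packages.

```python
from typing import Tuple

Size = Tuple[int, int]  # (width, height)


def plan_output_sizes(width: int, height: int, netscale: int = 4,
                      outscale: float = 4.0) -> Tuple[Size, Size, bool]:
    """Return (network output size, final size, whether an extra resize is needed)."""
    if width <= 0 or height <= 0 or netscale <= 0 or outscale <= 0:
        raise ValueError("sizes and scales must be positive")
    # The network always upsamples by its fixed factor (4 for an X4 model).
    net_size = (width * netscale, height * netscale)
    # Assumption: the final size is the input size times outscale, truncated.
    final_size = (int(width * outscale), int(height * outscale))
    return net_size, final_size, final_size != net_size


net, final, needs_resize = plan_output_sizes(640, 360, netscale=4, outscale=3.5)
print(net, final, needs_resize)  # (2560, 1440) (2240, 1260) True
```

With `--outscale 3.5` on an X4 model, a 640x360 input is first upsampled to 2560x1440 by the network and then resized down to 2240x1260; when `outscale` equals the network scale, no extra resize is needed.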

## :european_castle: Model Zoo

- [RealESRGAN_x4plus](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.1.0/RealESRGAN_x4plus.pth): X4 model for general images
- [RealESRGAN_x4plus_anime_6B](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.4/RealESRGAN_x4plus_anime_6B.pth): Optimized for anime images; 6 RRDB blocks (slightly smaller network)
- [RealESRGAN_x2plus](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.1/RealESRGAN_x2plus.pth): X2 model for general images
- [RealESRNet_x4plus](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.1.1/RealESRNet_x4plus.pth): X4 model with MSE loss (over-smooth effects)

- [official ESRGAN_x4](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.1.1/ESRGAN_SRx4_DF2KOST_official-ff704c30.pth): official ESRGAN model (X4)

The following models are **discriminators**, which are usually used for fine-tuning.

- [RealESRGAN_x4plus_netD](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.3/RealESRGAN_x4plus_netD.pth)
- [RealESRGAN_x2plus_netD](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.3/RealESRGAN_x2plus_netD.pth)
- [RealESRGAN_x4plus_anime_6B_netD](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.4/RealESRGAN_x4plus_anime_6B_netD.pth)
Please see [docs/model_zoo.md](docs/model_zoo.md)

## :computer: Training and Finetuning on your own dataset

129

README_CN.md


@@ -1,4 +1,8 @@

# Real-ESRGAN
<p align="center">
<img src="assets/realesrgan_logo.png" height=120>
</p>

## <div align="center"><b><a href="README.md">English</a> | <a href="README_CN.md">简体中文</a></b></div>

[](https://github.com/xinntao/Real-ESRGAN/releases)
[](https://pypi.org/project/realesrgan/)

@@ -8,29 +12,20 @@

[](https://github.com/xinntao/Real-ESRGAN/blob/master/.github/workflows/pylint.yml)
[](https://github.com/xinntao/Real-ESRGAN/blob/master/.github/workflows/publish-pip.yml)

[English](README.md) **|** [简体中文](README_CN.md)
:fire: Update the small model for anime videos, **RealESRGAN AnimeVideo-v3**. More information is in [anime video models](docs/anime_video_model.md) and [comparisons](docs/anime_comparisons.md).

1. [Colab Demo](https://colab.research.google.com/drive/1k2Zod6kSHEvraybHl50Lys0LerhyTMCo?usp=sharing) for Real-ESRGAN <a href="https://colab.research.google.com/drive/1k2Zod6kSHEvraybHl50Lys0LerhyTMCo?usp=sharing"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="google colab logo"></a>.
2. Portable executable files that **support Intel/AMD/Nvidia GPUs**: [Windows](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.4/realesrgan-ncnn-vulkan-20210901-windows.zip) / [Linux](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.4/realesrgan-ncnn-vulkan-20210901-ubuntu.zip) / [macOS](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.4/realesrgan-ncnn-vulkan-20210901-macos.zip). For details, see [here](#便携版(绿色版)可执行文件). The NCNN implementation is in [Real-ESRGAN-ncnn-vulkan](https://github.com/xinntao/Real-ESRGAN-ncnn-vulkan).
2. [Colab Demo](https://colab.research.google.com/drive/1yNl9ORUxxlL4N0keJa2SEPB61imPQd1B?usp=sharing) for Real-ESRGAN **anime videos** <a href="https://colab.research.google.com/drive/1yNl9ORUxxlL4N0keJa2SEPB61imPQd1B?usp=sharing"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="google colab logo"></a>.
3. Portable executable files that **support Intel/AMD/Nvidia GPUs**: [Windows](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesrgan-ncnn-vulkan-20220424-windows.zip) / [Linux](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesrgan-ncnn-vulkan-20220424-ubuntu.zip) / [macOS](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesrgan-ncnn-vulkan-20220424-macos.zip). For details, see [here](#便携版(绿色版)可执行文件). The NCNN implementation is in [Real-ESRGAN-ncnn-vulkan](https://github.com/xinntao/Real-ESRGAN-ncnn-vulkan).

Thanks for your attention and use :-) The anime/illustration model still has many problems, mainly: 1. It cannot process videos; 2. Depth-of-field blur is handled poorly; 3. It is not adjustable, and the effect is too strong; 4. It changes the original style. Everyone has given very good feedback, which will be gradually updated in [this document](feedback.md). Hopefully, a new model will be available before long.

Real-ESRGAN aims to develop **practical image restoration algorithms**.<br>
Real-ESRGAN aims to develop **practical image/video restoration algorithms**.<br>
Building on ESRGAN, we train with purely synthetic data so that the model can be applied to real-world image restoration (hence the name Real-ESRGAN).

:art: Real-ESRGAN needs, and very much welcomes, your contributions, such as new features, models, bug fixes, suggestions, and maintenance. See [CONTRIBUTING.md](CONTRIBUTING.md) for details. All contributors will be listed [here](README_CN.md#hugs-感谢).

:question: Answers to common questions can be found in [FAQ.md](FAQ.md). (Well, it is still empty for now =-=||)
:milky_way: Thanks to everyone for the very good feedback. It will be gradually updated in [this document](feedback.md).

:triangular_flag_on_post: **Updates**
- :white_check_mark: Added the ncnn implementation: [Real-ESRGAN-ncnn-vulkan](https://github.com/xinntao/Real-ESRGAN-ncnn-vulkan).
- :white_check_mark: Added [*RealESRGAN_x4plus_anime_6B.pth*](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.4/RealESRGAN_x4plus_anime_6B.pth), which is optimized for anime images and has a smaller model size. For details and a comparison with [waifu2x](https://github.com/nihui/waifu2x-ncnn-vulkan), see [**anime_model.md**](docs/anime_model.md)
- :white_check_mark: Support finetuning on your own data: [details](Training.md#Finetune-Real-ESRGAN-on-your-own-dataset)
- :white_check_mark: Support **face enhancement** with [GFPGAN](https://github.com/TencentARC/GFPGAN)
- :white_check_mark: Added to [Huggingface Spaces](https://huggingface.co/spaces) (an online platform for machine learning apps) via [Gradio](https://github.com/gradio-app/gradio): [Gradio Web Demo](https://huggingface.co/spaces/akhaliq/Real-ESRGAN). Thanks [@AK391](https://github.com/AK391)
- :white_check_mark: Support arbitrary scaling with `--outscale` (it actually uses `LANCZOS4` to further resize the output image). Added the *RealESRGAN_x2plus.pth* model
- :white_check_mark: [The inference script](inference_realesrgan.py) supports: 1) **tile** processing; 2) images with an **alpha channel**; 3) **gray** images; 4) **16-bit** images.
- :white_check_mark: The training code has been released; see [Training.md](Training.md) for details.
:question: Answers to common questions can be found in [FAQ.md](FAQ.md). (Well, it is still empty for now =-=||)

---

@@ -43,6 +38,51 @@ Real-ESRGAN aims to develop **practical image restoration algorithms**.<br>

|

||||

|

||||

---

|

||||

|

||||

<!---------------------------------- Updates --------------------------->

|

||||

<details>

|

||||

<summary>🚩<b>Updates</b></summary>

- ✅ Updated the small model for anime videos, **RealESRGAN AnimeVideo-v3**. More information is in [anime video models](docs/anime_video_model.md) and [comparisons](docs/anime_comparisons.md).
- ✅ Added small models for anime videos. More information is in [anime video models](docs/anime_video_model.md).
- ✅ Added the ncnn implementation: [Real-ESRGAN-ncnn-vulkan](https://github.com/xinntao/Real-ESRGAN-ncnn-vulkan).
- ✅ Added [*RealESRGAN_x4plus_anime_6B.pth*](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.4/RealESRGAN_x4plus_anime_6B.pth), which is optimized for anime images and has a smaller model size. For details and a comparison with [waifu2x](https://github.com/nihui/waifu2x-ncnn-vulkan), see [**anime_model.md**](docs/anime_model.md)
- ✅ Support fine-tuning on your own data: [details](Training.md#Finetune-Real-ESRGAN-on-your-own-dataset)
- ✅ Support **face enhancement** with [GFPGAN](https://github.com/TencentARC/GFPGAN)
- ✅ Integrated into [Huggingface Spaces](https://huggingface.co/spaces) (an online platform for machine learning apps) via [Gradio](https://github.com/gradio-app/gradio): [Gradio web demo](https://huggingface.co/spaces/akhaliq/Real-ESRGAN). Thanks to [@AK391](https://github.com/AK391)
- ✅ Support arbitrary output scales with `--outscale` (it actually uses `LANCZOS4` to further resize the output image). Added the *RealESRGAN_x2plus.pth* model
- ✅ The [inference script](inference_realesrgan.py) supports: 1) **tile** processing; 2) images with an **alpha channel**; 3) **gray** images; 4) **16-bit** images.
- ✅ The training code has been released; see [Training.md](Training.md) for details.

|

||||

|

||||

</details>

|

||||

|

||||

<!---------------------------------- Projects that use Real-ESRGAN --------------------------->

|

||||

<details>

|

||||

<summary>🧩<b>Projects that use Real-ESRGAN</b></summary>

👋 If you develop, use, or integrate Real-ESRGAN in your project, feel free to contact me to have it added here

|

||||

|

||||

- NCNN-Android: [RealSR-NCNN-Android](https://github.com/tumuyan/RealSR-NCNN-Android) by [tumuyan](https://github.com/tumuyan)

|

||||

- VapourSynth: [vs-realesrgan](https://github.com/HolyWu/vs-realesrgan) by [HolyWu](https://github.com/HolyWu)

|

||||

- NCNN: [Real-ESRGAN-ncnn-vulkan](https://github.com/xinntao/Real-ESRGAN-ncnn-vulkan)

|

||||

|

||||

**Easy-to-use GUIs**

|

||||

|

||||

- [Waifu2x-Extension-GUI](https://github.com/AaronFeng753/Waifu2x-Extension-GUI) by [AaronFeng753](https://github.com/AaronFeng753)

|

||||

- [Squirrel-RIFE](https://github.com/Justin62628/Squirrel-RIFE) by [Justin62628](https://github.com/Justin62628)

|

||||

- [Real-GUI](https://github.com/scifx/Real-GUI) by [scifx](https://github.com/scifx)

|

||||

- [Real-ESRGAN_GUI](https://github.com/net2cn/Real-ESRGAN_GUI) by [net2cn](https://github.com/net2cn)

|

||||

- [Real-ESRGAN-EGUI](https://github.com/WGzeyu/Real-ESRGAN-EGUI) by [WGzeyu](https://github.com/WGzeyu)

|

||||

- [anime_upscaler](https://github.com/shangar21/anime_upscaler) by [shangar21](https://github.com/shangar21)

|

||||

|

||||

</details>

|

||||

|

||||

<details>

|

||||

<summary>👀<b>Demo videos (Bilibili)</b></summary>

- [Clip from Havoc in Heaven (大闹天宫)](https://www.bilibili.com/video/BV1ja41117zb)

|

||||

|

||||

</details>

|

||||

|

||||

### :book: Real-ESRGAN: Training Real-World Blind Super-Resolution with Pure Synthetic Data

|

||||

|

||||

> [[Paper](https://arxiv.org/abs/2107.10833)]   [Project page]   [[YouTube video](https://www.youtube.com/watch?v=fxHWoDSSvSc)]   [[Bilibili video](https://www.bilibili.com/video/BV1H34y1m7sS/)]   [[Poster](https://xinntao.github.io/projects/RealESRGAN_src/RealESRGAN_poster.pdf)]   [[PPT](https://docs.google.com/presentation/d/1QtW6Iy8rm8rGLsJ0Ldti6kP-7Qyzy6XL/edit?usp=sharing&ouid=109799856763657548160&rtpof=true&sd=true)]<br>

|

||||

@@ -76,21 +116,22 @@ Real-ESRGAN 将会被长期支持,我会在空闲的时间中持续维护更

|

||||

|

||||

### Portable executable files

You can download portable executables that **support Intel/AMD/Nvidia GPUs**: [Windows](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.4/realesrgan-ncnn-vulkan-20210901-windows.zip) / [Linux](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.4/realesrgan-ncnn-vulkan-20210901-ubuntu.zip) / [macOS](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.4/realesrgan-ncnn-vulkan-20210901-macos.zip).

You can download portable executables that **support Intel/AMD/Nvidia GPUs**: [Windows](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesrgan-ncnn-vulkan-20220424-windows.zip) / [Linux](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesrgan-ncnn-vulkan-20220424-ubuntu.zip) / [macOS](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesrgan-ncnn-vulkan-20220424-macos.zip).

"Portable" means you can run these executables directly (even from a USB drive), because they already include all the required files and models. No CUDA or PyTorch environment is required.<br>

You can run them with the following command (a Windows example; see the README.md of each executable for more information):

|

||||

|

||||

```bash

|

||||

./realesrgan-ncnn-vulkan.exe -i input.jpg -o output.png
./realesrgan-ncnn-vulkan.exe -i input.jpg -o output.png -n model_name

|

||||

```

|

||||

|

||||

We provide three models:

We provide four models:

1. realesrgan-x4plus (default)
2. realesrnet-x4plus
3. realesrgan-x4plus-anime (optimized for anime illustrations, with a smaller model size)
4. realesr-animevideov3 (for anime videos)

You can use the `-n` argument to select another model, for example `./realesrgan-ncnn-vulkan.exe -i anime_input.jpg -o anime_output.png -n realesrgan-x4plus-anime`

|

||||

|

||||

@@ -103,23 +144,21 @@ Real-ESRGAN 将会被长期支持,我会在空闲的时间中持续维护更

|

||||

Usage: realesrgan-ncnn-vulkan.exe -i infile -o outfile [options]...

|

||||

|

||||

-h show this help

|

||||

-v verbose output

|

||||

-i input-path input image path (jpg/png/webp) or directory

|

||||

-o output-path output image path (jpg/png/webp) or directory

|

||||

-s scale upscale ratio (4, default=4)

|

||||

-s scale upscale ratio (can be 2, 3, 4. default=4)

|

||||

-t tile-size tile size (>=32/0=auto, default=0) can be 0,0,0 for multi-gpu

|

||||

-m model-path folder path to pre-trained models(default=models)

|

||||

-n model-name model name (default=realesrgan-x4plus, can be realesrgan-x4plus | realesrgan-x4plus-anime | realesrnet-x4plus)

|

||||

-g gpu-id gpu device to use (default=0) can be 0,1,2 for multi-gpu

|

||||

-m model-path folder path to the pre-trained models. default=models

|

||||

-n model-name model name (default=realesr-animevideov3, can be realesr-animevideov3 | realesrgan-x4plus | realesrgan-x4plus-anime | realesrnet-x4plus)

|

||||

-g gpu-id gpu device to use (default=auto) can be 0,1,2 for multi-gpu

|

||||

-j load:proc:save thread count for load/proc/save (default=1:2:2) can be 1:2,2,2:2 for multi-gpu

|

||||

-x enable tta mode

|

||||

-x enable tta mode

|

||||

-f format output image format (jpg/png/webp, default=ext/png)

|

||||

-v verbose output

|

||||

```
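The options listed above can also be assembled from a script. The following is only a sketch under stated assumptions: `build_ncnn_command` is a hypothetical helper (not part of Real-ESRGAN), the executable path is an assumed default, and the accepted model names and scales are copied from the help text above.

```python
from typing import List, Optional

# Model names and scales copied from the help text above.
MODELS = (
    "realesr-animevideov3",
    "realesrgan-x4plus",
    "realesrgan-x4plus-anime",
    "realesrnet-x4plus",
)
SCALES = (2, 3, 4)


def build_ncnn_command(input_path: str, output_path: str, model: str,
                       scale: int = 4, fmt: Optional[str] = None,
                       exe: str = "./realesrgan-ncnn-vulkan.exe") -> List[str]:
    """Build an argument list for the portable executable (hypothetical helper)."""
    if model not in MODELS:
        raise ValueError(f"unknown model name: {model}")
    if scale not in SCALES:
        raise ValueError(f"scale must be one of {SCALES}, got {scale}")
    cmd = [exe, "-i", input_path, "-o", output_path, "-n", model, "-s", str(scale)]
    if fmt is not None:
        # -f selects the output image format (jpg/png/webp).
        cmd += ["-f", fmt]
    return cmd


cmd = build_ncnn_command("tmp_frames", "out_frames",
                         model="realesr-animevideov3", scale=2, fmt="jpg")
print(" ".join(cmd))
```

The resulting list can be passed to `subprocess.run(cmd, check=True)` once the executable is available locally.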

|

||||

|

||||

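Because these executables split the image into several tiles, process each tile separately, and then stitch the results together, the output may show slight seams between tiles (and may differ somewhat from the PyTorch output)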
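To illustrate the tiling idea, here is a minimal sketch of how an image could be cut into tiles with a small padded border. It is not the ncnn implementation: `tile_boxes` is a hypothetical name, the default padding of 10 mirrors the `--tile_pad` default of the Python script, and treating a tile size of 0 as "no tiling" follows the Python script's convention rather than the executable's `0=auto`.

```python
from typing import Iterator, Tuple

Box = Tuple[int, int, int, int]  # (left, top, right, bottom); right/bottom exclusive


def tile_boxes(width: int, height: int, tile: int,
               pad: int = 10) -> Iterator[Tuple[Box, Box]]:
    """Yield (core, padded) boxes that together cover a width x height image.

    `core` is the region a tile is responsible for in the final output;
    `padded` extends it by `pad` pixels on each side (clamped to the image)
    so that neighbouring tiles share some context.
    """
    if tile <= 0:
        # Assumed convention: tile size 0 means the whole image is one tile.
        yield (0, 0, width, height), (0, 0, width, height)
        return
    for top in range(0, height, tile):
        for left in range(0, width, tile):
            right = min(left + tile, width)
            bottom = min(top + tile, height)
            padded = (max(left - pad, 0), max(top - pad, 0),
                      min(right + pad, width), min(bottom + pad, height))
            yield (left, top, right, bottom), padded


boxes = list(tile_boxes(width=500, height=300, tile=200, pad=10))
print(len(boxes))  # number of tiles
print(boxes[0])    # first (core, padded) pair
```

Each tile only sees its padded neighbourhood, which is one plausible reason the stitched result can differ slightly from processing the whole image at once.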

|

||||

|

||||

These executables are all based on [Tencent/ncnn](https://github.com/Tencent/ncnn) and [realsr-ncnn-vulkan](https://github.com/nihui/realsr-ncnn-vulkan) by [nihui](https://github.com/nihui). Thanks!

|

||||

|

||||

---

|

||||

|

||||

## :wrench: Dependencies and Installation

|

||||

@@ -162,7 +201,7 @@ wget https://github.com/xinntao/Real-ESRGAN/releases/download/v0.1.0/RealESRGAN_

|

||||

Inference!

|

||||

|

||||

```bash

|

||||

python inference_realesrgan.py --model_path experiments/pretrained_models/RealESRGAN_x4plus.pth --input inputs --face_enhance

|

||||

python inference_realesrgan.py -n RealESRGAN_x4plus -i inputs --face_enhance

|

||||

```

|

||||

|

||||

The results are saved in the `results` folder

|

||||

@@ -180,47 +219,35 @@ python inference_realesrgan.py --model_path experiments/pretrained_models/RealES

|

||||

# download model
wget https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.4/RealESRGAN_x4plus_anime_6B.pth -P experiments/pretrained_models
# inference

|

||||

python inference_realesrgan.py --model_path experiments/pretrained_models/RealESRGAN_x4plus_anime_6B.pth --input inputs

|

||||

python inference_realesrgan.py -n RealESRGAN_x4plus_anime_6B -i inputs

|

||||

```

|

||||

|

||||

The results are saved in the `results` folder

### Usage of the Python script

1. Although you use an X4 model, you can **output images at an arbitrary scale** by setting the `outscale` argument. The program further resizes the model's output image.

|

||||

|

||||

```console

|

||||

Usage: python inference_realesrgan.py --model_path experiments/pretrained_models/RealESRGAN_x4plus.pth --input infile --output outfile [options]...

|

||||

Usage: python inference_realesrgan.py -n RealESRGAN_x4plus -i infile -o outfile [options]...

|

||||

|

||||

A common command: python inference_realesrgan.py --model_path experiments/pretrained_models/RealESRGAN_x4plus.pth --input infile --netscale 4 --outscale 3.5 --half --face_enhance

|

||||

A common command: python inference_realesrgan.py -n RealESRGAN_x4plus -i infile --outscale 3.5 --face_enhance

|

||||

|

||||

-h show this help

|

||||

--input Input image or folder. Default: inputs

|

||||

--output Output folder. Default: results

|

||||

--model_path Path to the pre-trained model. Default: experiments/pretrained_models/RealESRGAN_x4plus.pth

|

||||

--netscale Upsample scale factor of the network. Default: 4

|

||||

--outscale The final upsampling scale of the image. Default: 4

|

||||

-i, --input Input image or folder. Default: inputs
-o, --output Output folder. Default: results
-n, --model_name Model name. Default: RealESRGAN_x4plus

|

||||

-s, --outscale The final upsampling scale of the image. Default: 4

|

||||

--suffix Suffix of the restored image. Default: out

|

||||

--tile Tile size, 0 for no tile during testing. Default: 0

|

||||

-t, --tile Tile size, 0 for no tile during testing. Default: 0

|

||||

--face_enhance Whether to use GFPGAN to enhance face. Default: False

|

||||

--half Whether to use half precision during inference. Default: False

|

||||

--fp32 Use fp32 precision during inference. Default: fp16 (half precision)

|

||||

--ext Image extension. Options: auto | jpg | png, auto means using the same extension as inputs. Default: auto

|

||||

```
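The relationship between the network scale and `--outscale` comes down to a little arithmetic: the network first upsamples by its native factor, and the result is then resized to the requested final scale. The sketch below is illustrative only; `plan_output` is a hypothetical helper, and truncating to whole pixels is an assumption about rounding, not a statement about the actual script.

```python
from typing import Tuple


def plan_output(width: int, height: int, netscale: int = 4,
                outscale: float = 4.0) -> Tuple[Tuple[int, int], Tuple[int, int]]:
    """Return (network_size, final_size) for an input of width x height."""
    # Size produced by the network itself (e.g. X4 for RealESRGAN_x4plus).
    network_size = (width * netscale, height * netscale)
    # Assumption: the final size is input size times outscale, truncated to
    # whole pixels; the real script may round differently.
    final_size = (int(width * outscale), int(height * outscale))
    return network_size, final_size


network_size, final_size = plan_output(640, 480, netscale=4, outscale=3.5)
print(network_size)  # size produced by the X4 network
print(final_size)    # size after the extra resize step
```

With `outscale` equal to the network scale, the two sizes coincide and no extra resize is needed.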

|

||||

|

||||

## :european_castle: Model Zoo

|

||||

|

||||

- [RealESRGAN_x4plus](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.1.0/RealESRGAN_x4plus.pth): X4 model for general images

|

||||

- [RealESRGAN_x4plus_anime_6B](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.4/RealESRGAN_x4plus_anime_6B.pth): Optimized for anime images; 6 RRDB blocks (slightly smaller network)

|

||||

- [RealESRGAN_x2plus](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.1/RealESRGAN_x2plus.pth): X2 model for general images

|

||||

- [RealESRNet_x4plus](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.1.1/RealESRNet_x4plus.pth): X4 model with MSE loss (over-smooth effects)

|

||||

|

||||

- [official ESRGAN_x4](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.1.1/ESRGAN_SRx4_DF2KOST_official-ff704c30.pth): official ESRGAN model (X4)

|

||||

|

||||

The following are **discriminator** models, which are usually used for fine-tuning.

|

||||

|

||||

- [RealESRGAN_x4plus_netD](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.3/RealESRGAN_x4plus_netD.pth)

|

||||

- [RealESRGAN_x2plus_netD](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.3/RealESRGAN_x2plus_netD.pth)

|

||||

- [RealESRGAN_x4plus_anime_6B_netD](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.4/RealESRGAN_x4plus_anime_6B_netD.pth)

|

||||

Please see [docs/model_zoo.md](docs/model_zoo.md)

|

||||

|

||||

## :computer: Training and fine-tuning on your own dataset

|

||||

|

||||

|

||||

BIN

assets/realesrgan_logo.png

Normal file

Binary file not shown.

|

After Width: | Height: | Size: 83 KiB |

BIN

assets/realesrgan_logo_ai.png

Normal file

Binary file not shown.

|

After Width: | Height: | Size: 81 KiB |

BIN

assets/realesrgan_logo_av.png

Normal file

Binary file not shown.

|

After Width: | Height: | Size: 81 KiB |

BIN

assets/realesrgan_logo_gi.png

Normal file

Binary file not shown.

|

After Width: | Height: | Size: 81 KiB |

BIN

assets/realesrgan_logo_gv.png

Normal file

Binary file not shown.

|

After Width: | Height: | Size: 81 KiB |

65

docs/anime_comparisons.md

Normal file

@@ -0,0 +1,65 @@

|

||||

# Comparisons among different anime models

|

||||

|

||||

## Update News

|

||||

|

||||

- 2022/04/24: Release **AnimeVideo-v3**. We have made the following improvements:
  - **better naturalness**
  - **fewer artifacts**
  - **more faithful to the original colors**
  - **better texture restoration**
  - **better background restoration**

|

||||

|

||||

## Comparisons

|

||||

|

||||

We have compared our RealESRGAN-AnimeVideo-v3 with the following methods.

|

||||

Our RealESRGAN-AnimeVideo-v3 can achieve better results with faster inference speed.

|

||||

|

||||

- [waifu2x](https://github.com/nihui/waifu2x-ncnn-vulkan) with the hyperparameters: `tile=0`, `noiselevel=2`

|

||||

|

||||

- [Real-CUGAN](https://github.com/bilibili/ailab/tree/main/Real-CUGAN): we use the [20220227](https://github.com/bilibili/ailab/releases/tag/Real-CUGAN-add-faster-low-memory-mode) version with the hyperparameters `cache_mode=0`, `tile=0`, `alpha=1`.

|

||||

- our RealESRGAN-AnimeVideo-v3

|

||||

|

||||

## Results

|

||||

|

||||

You may need to **zoom in** to compare details, or **click the image** to view it at full size.

|

||||

|

||||

**More natural results, better background restoration**

|

||||

| Input | waifu2x | Real-CUGAN | RealESRGAN<br>AnimeVideo-v3 |

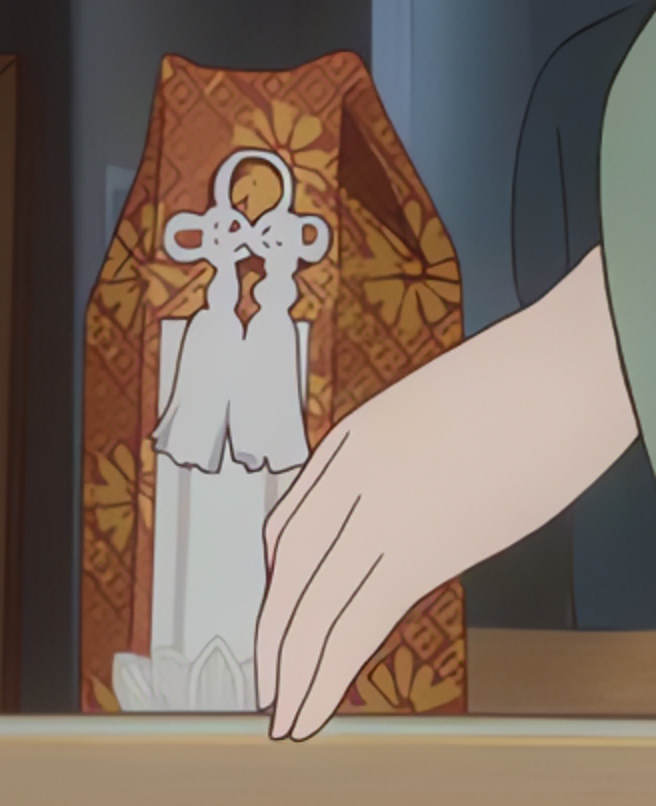

|

||||

| :---: | :---: | :---: | :---: |

|

||||

| |  |  |  |

|

||||

| |  |  |  |

|

||||

| |  |  |  |

|

||||

|

||||

**Fewer artifacts, better detailed textures**

|

||||

| Input | waifu2x | Real-CUGAN | RealESRGAN<br>AnimeVideo-v3 |

|

||||

| :---: | :---: | :---: | :---: |

|

||||

| |  |  |  |

|

||||

| |  |  |  |

|

||||

| |  |  |  |

|

||||

| |  |  |  |

|

||||

|

||||

**Other better results**

|

||||

| Input | waifu2x | Real-CUGAN | RealESRGAN<br>AnimeVideo-v3 |

|

||||

| :---: | :---: | :---: | :---: |

|

||||

| |  |  |  |

|

||||

| |  |  |  |

|

||||

|  |   |   |   |

|

||||

| |  |  |  |

|

||||

| |  |  |  |

|

||||

|

||||

## Inference Speed

|

||||

|

||||

### PyTorch

|

||||

|

||||

Note that we only report the **model** time, and ignore the IO time.

|

||||

|

||||

| GPU | Input Resolution | waifu2x | Real-CUGAN | RealESRGAN-AnimeVideo-v3 |
| :---: | :---: | :---: | :---: | :---: |
| V100 | 1920 x 1080 | - | 3.4 fps | **10.0** fps |
| V100 | 1280 x 720 | - | 7.2 fps | **22.6** fps |
| V100 | 640 x 480 | - | 24.4 fps | **65.9** fps |
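As a quick worked example, the relative speed of the two models can be derived from the reported numbers. The snippet only re-uses the fps values from the table; the dictionary layout and variable names are illustrative.

```python
# Frames per second from the table above, keyed by input height in pixels:
# (Real-CUGAN, RealESRGAN-AnimeVideo-v3)
fps_by_height = {
    1080: (3.4, 10.0),
    720: (7.2, 22.6),
    480: (24.4, 65.9),
}

# Ratio of RealESRGAN-AnimeVideo-v3 throughput to Real-CUGAN throughput.
speedups = {h: ours / cugan for h, (cugan, ours) in fps_by_height.items()}

for height, ratio in speedups.items():
    print(f"input height {height}: {ratio:.2f}x the Real-CUGAN frame rate")
```

On these figures the ratio is roughly 2.7x to 3.1x, model time only, since IO time is excluded from the table.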

|

||||

|

||||

### ncnn

|

||||

|

||||

- [ ] TODO

|

||||

@@ -1,12 +1,13 @@

|

||||

# Anime model

|

||||

# Anime Model

|

||||

|

||||

:white_check_mark: We add [*RealESRGAN_x4plus_anime_6B.pth*](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.4/RealESRGAN_x4plus_anime_6B.pth), which is optimized for **anime** images with much smaller model size.

|

||||

|

||||

- [How to Use](#How-to-Use)

|

||||

- [PyTorch Inference](#PyTorch-Inference)

|

||||

- [ncnn Executable File](#ncnn-Executable-File)

|

||||

- [Comparisons with waifu2x](#Comparisons-with-waifu2x)

|

||||

- [Comparisons with Sliding Bars](#Comparions-with-Sliding-Bars)

|

||||

- [Anime Model](#anime-model)

|

||||

- [How to Use](#how-to-use)

|

||||

- [PyTorch Inference](#pytorch-inference)

|

||||

- [ncnn Executable File](#ncnn-executable-file)

|

||||

- [Comparisons with waifu2x](#comparisons-with-waifu2x)

|

||||

- [Comparisons with Sliding Bars](#comparisons-with-sliding-bars)

|

||||

|

||||

<p align="center">

|

||||

<img src="https://raw.githubusercontent.com/xinntao/public-figures/master/Real-ESRGAN/cmp_realesrgan_anime_1.png">

|

||||

@@ -14,7 +15,7 @@

|

||||

|

||||

The following is a video comparison with a sliding bar. You may need to use full-screen mode for better visual quality, as the original image is large; otherwise, you may see aliasing artifacts.

|

||||

|

||||

https://user-images.githubusercontent.com/17445847/131535127-613250d4-f754-4e20-9720-2f9608ad0675.mp4

|

||||

<https://user-images.githubusercontent.com/17445847/131535127-613250d4-f754-4e20-9720-2f9608ad0675.mp4>

|

||||

|

||||

## How to Use

|

||||

|

||||

@@ -26,12 +27,12 @@ Pre-trained models: [RealESRGAN_x4plus_anime_6B](https://github.com/xinntao/Real

|

||||

# download model

|

||||

wget https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.4/RealESRGAN_x4plus_anime_6B.pth -P experiments/pretrained_models

|

||||

# inference

|

||||

python inference_realesrgan.py --model_path experiments/pretrained_models/RealESRGAN_x4plus_anime_6B.pth --input inputs

|

||||

python inference_realesrgan.py -n RealESRGAN_x4plus_anime_6B -i inputs

|

||||

```

|

||||

|

||||

### ncnn Executable File

|

||||

|

||||

Download the latest portable [Windows](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.4/realesrgan-ncnn-vulkan-20210901-windows.zip) / [Linux](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.4/realesrgan-ncnn-vulkan-20210901-ubuntu.zip) / [MacOS](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.4/realesrgan-ncnn-vulkan-20210901-macos.zip) **executable files for Intel/AMD/Nvidia GPU**.

|

||||

Download the latest portable [Windows](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesrgan-ncnn-vulkan-20220424-windows.zip) / [Linux](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesrgan-ncnn-vulkan-20220424-ubuntu.zip) / [MacOS](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesrgan-ncnn-vulkan-20220424-macos.zip) **executable files for Intel/AMD/Nvidia GPU**.

|

||||

|

||||

Taking Windows as an example, run:

|

||||

|

||||

@@ -63,6 +64,6 @@ We compare Real-ESRGAN-anime with [waifu2x](https://github.com/nihui/waifu2x-ncn

|

||||

|

||||

The following are video comparisons with a sliding bar. You may need to use full-screen mode for better visual quality, as the original image is large; otherwise, you may see aliasing artifacts.

|

||||

|

||||

https://user-images.githubusercontent.com/17445847/131536647-a2fbf896-b495-4a9f-b1dd-ca7bbc90101a.mp4

|

||||

<https://user-images.githubusercontent.com/17445847/131536647-a2fbf896-b495-4a9f-b1dd-ca7bbc90101a.mp4>

|

||||

|

||||

https://user-images.githubusercontent.com/17445847/131536742-6d9d82b6-9765-4296-a15f-18f9aeaa5465.mp4

|

||||

<https://user-images.githubusercontent.com/17445847/131536742-6d9d82b6-9765-4296-a15f-18f9aeaa5465.mp4>

|

||||

|

||||

123

docs/anime_video_model.md

Normal file

@@ -0,0 +1,123 @@

|

||||

# Anime Video Models

|

||||

|

||||

:white_check_mark: We add small models that are optimized for anime videos :-)<br>

|

||||

More comparisons can be found in [anime_comparisons.md](anime_comparisons.md)

|

||||

|

||||

- [How to Use](#how-to-use)

|

||||

- [PyTorch Inference](#pytorch-inference)

|

||||

- [ncnn Executable File](#ncnn-executable-file)

|

||||

- [Step 1: Use ffmpeg to extract frames from video](#step-1-use-ffmpeg-to-extract-frames-from-video)

|

||||

- [Step 2: Inference with Real-ESRGAN executable file](#step-2-inference-with-real-esrgan-executable-file)

|

||||

- [Step 3: Merge the enhanced frames back into a video](#step-3-merge-the-enhanced-frames-back-into-a-video)

|

||||

- [More Demos](#more-demos)

|

||||

|

||||

| Models | Scale | Description |
| --- | :---- | :---- |
| [realesr-animevideov3](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesr-animevideov3.pth) | X4 <sup>1</sup> | Anime video model with XS size |

|

||||

|

||||

Note: <br>

|

||||

<sup>1</sup> This model can also be used for X1, X2, X3.

|

||||

|

||||

---

|

||||

|

||||

The following are some demos (best viewed in full-screen mode).

|

||||

|

||||

<https://user-images.githubusercontent.com/17445847/145706977-98bc64a4-af27-481c-8abe-c475e15db7ff.MP4>

|

||||

|

||||

<https://user-images.githubusercontent.com/17445847/145707055-6a4b79cb-3d9d-477f-8610-c6be43797133.MP4>

|

||||

|

||||

<https://user-images.githubusercontent.com/17445847/145783523-f4553729-9f03-44a8-a7cc-782aadf67b50.MP4>

|

||||

|

||||

## How to Use

|

||||

|

||||

### PyTorch Inference

|

||||

|

||||

```bash

|

||||

# download model

|

||||

wget https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesr-animevideov3.pth -P realesrgan/weights

|

||||

# inference

|

||||

python inference_realesrgan_video.py -i inputs/video/onepiece_demo.mp4 -n realesr-animevideov3 -s 2 --suffix outx2

|

||||

```

|

||||

|

||||

### NCNN Executable File

|

||||

|

||||

#### Step 1: Use ffmpeg to extract frames from video

|

||||

|

||||

```bash

|

||||

ffmpeg -i onepiece_demo.mp4 -qscale:v 1 -qmin 1 -qmax 1 -vsync 0 tmp_frames/frame%08d.png

|

||||

```

|

||||

|

||||

- Remember to create the folder `tmp_frames` beforehand

|

||||

|

||||

#### Step 2: Inference with Real-ESRGAN executable file

|

||||

|

||||

1. Download the latest portable [Windows](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesrgan-ncnn-vulkan-20220424-windows.zip) / [Linux](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesrgan-ncnn-vulkan-20220424-ubuntu.zip) / [MacOS](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesrgan-ncnn-vulkan-20220424-macos.zip) **executable files for Intel/AMD/Nvidia GPU**

|

||||

|

||||

1. Taking Windows as an example, run:

|

||||

|

||||

```bash

|

||||

./realesrgan-ncnn-vulkan.exe -i tmp_frames -o out_frames -n realesr-animevideov3 -s 2 -f jpg

|

||||

```

|

||||

|

||||

- Remember to create the folder `out_frames` beforehand
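Steps 1 and 2 both expect their folders to exist before the tools run. A minimal sketch of that preparation is shown below; `prepare_frame_dirs` and `extract_frames_command` are hypothetical helpers, the temporary directory only stands in for a real working directory, and the ffmpeg arguments are copied from Step 1 (the command is built, not executed).

```python
import tempfile
from pathlib import Path
from typing import List, Sequence


def prepare_frame_dirs(root: Path,
                       names: Sequence[str] = ("tmp_frames", "out_frames")) -> List[Path]:
    """Create the frame folders under `root` if they do not exist yet."""
    dirs = []
    for name in names:
        folder = root / name
        folder.mkdir(parents=True, exist_ok=True)
        dirs.append(folder)
    return dirs


def extract_frames_command(video: str, frames_dir: Path) -> List[str]:
    """Argument list for the frame-extraction command from Step 1."""
    return ["ffmpeg", "-i", video, "-qscale:v", "1", "-qmin", "1", "-qmax", "1",
            "-vsync", "0", str(frames_dir / "frame%08d.png")]


with tempfile.TemporaryDirectory() as tmp:
    tmp_frames, out_frames = prepare_frame_dirs(Path(tmp))
    created = tmp_frames.is_dir() and out_frames.is_dir()
    cmd = extract_frames_command("onepiece_demo.mp4", tmp_frames)

print(created)
print(cmd[:3])
```

In a real run, `root` would be the working directory and the command would be passed to `subprocess.run(cmd, check=True)`.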

|

||||

|

||||

#### Step 3: Merge the enhanced frames back into a video

|

||||

|

||||

1. First, obtain the fps of the input video by running

|

||||

|

||||

```bash

|

||||

ffmpeg -i onepiece_demo.mp4

|

||||

```

|

||||

|

||||

```console

|

||||

Usage:

|

||||

-i input video path

|

||||

```

|

||||

|

||||

You will get the output similar to the following screenshot.

|

||||

|

||||

<p align="center">

|

||||

<img src="https://user-images.githubusercontent.com/17445847/145710145-c4f3accf-b82f-4307-9f20-3803a2c73f57.png">

|

||||

</p>

|

||||

|

||||

2. Merge frames

|

||||

|

||||

```bash

|

||||

ffmpeg -r 23.98 -i out_frames/frame%08d.jpg -c:v libx264 -r 23.98 -pix_fmt yuv420p output.mp4

|

||||

```

|

||||

|

||||

```console

|

||||

Usage:

|

||||

-i input path (here, the frame sequence pattern)

|

||||

-c:v video encoder (usually we use libx264)

|

||||

-r fps, remember to modify it to meet your needs

|

||||

-pix_fmt pixel format in video

|

||||

```

|

||||

|

||||

If you also want to copy audio from the input videos, run:

|

||||

|

||||

```bash

|

||||

ffmpeg -r 23.98 -i out_frames/frame%08d.jpg -i onepiece_demo.mp4 -map 0:v:0 -map 1:a:0 -c:a copy -c:v libx264 -r 23.98 -pix_fmt yuv420p output_w_audio.mp4

|

||||

```

|

||||

|

||||

```console

|

||||

Usage:

|

||||

-i input video path, here we use two input streams

|

||||

-c:v video encoder (usually we use libx264)

|

||||

-r fps, remember to modify it to meet your needs

|

||||

-pix_fmt pixel format in video

|

||||

```
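The two merge commands above differ only in the optional audio stream, so they can be generated by one small function. This is a sketch under stated assumptions: `merge_frames_command` is a hypothetical helper, it only builds the argument list (it does not run ffmpeg), and it uses exactly the flags shown above.

```python
from typing import List, Optional


def merge_frames_command(frames_pattern: str, fps: float, output: str,
                         audio_source: Optional[str] = None) -> List[str]:
    """Build the ffmpeg argument list that merges frames into a video."""
    rate = f"{fps:g}"  # e.g. 23.98 -> "23.98"
    cmd = ["ffmpeg", "-r", rate, "-i", frames_pattern]
    if audio_source is not None:
        # Second input stream: take video from input 0 and audio from input 1,
        # copying the audio without re-encoding.
        cmd += ["-i", audio_source, "-map", "0:v:0", "-map", "1:a:0", "-c:a", "copy"]
    cmd += ["-c:v", "libx264", "-r", rate, "-pix_fmt", "yuv420p", output]
    return cmd


silent = merge_frames_command("out_frames/frame%08d.jpg", 23.98, "output.mp4")
with_audio = merge_frames_command("out_frames/frame%08d.jpg", 23.98,
                                  "output_w_audio.mp4",
                                  audio_source="onepiece_demo.mp4")
print(" ".join(silent))
print(" ".join(with_audio))
```

Remember to replace `23.98` with the fps reported for your own input video.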

|

||||

|

||||

## More Demos

|

||||

|

||||

- Input video for One Piece:

|

||||

|

||||

<https://user-images.githubusercontent.com/17445847/145706822-0e83d9c4-78ef-40ee-b2a4-d8b8c3692d17.mp4>

|

||||

|

||||

- Output video for One Piece:

|

||||

|

||||

<https://user-images.githubusercontent.com/17445847/164960481-759658cf-fcb8-480c-b888-cecb606e8744.mp4>

|

||||

|

||||

**More comparisons**

|

||||

|

||||

<https://user-images.githubusercontent.com/17445847/145707458-04a5e9b9-2edd-4d1f-b400-380a72e5f5e6.MP4>

|

||||

48

docs/model_zoo.md

Normal file

@@ -0,0 +1,48 @@

|

||||

# :european_castle: Model Zoo

|

||||

|

||||

- [For General Images](#for-general-images)
- [For Anime Images / Illustrations](#for-anime-images--illustrations)
- [For Animation Videos](#for-animation-videos)

|

||||

|

||||

---

|

||||

|

||||

## For General Images

|

||||

|

||||

| Models | Scale | Description |
| --- | :---- | :---- |
| [RealESRGAN_x4plus](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.1.0/RealESRGAN_x4plus.pth) | X4 | X4 model for general images |
| [RealESRGAN_x2plus](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.1/RealESRGAN_x2plus.pth) | X2 | X2 model for general images |
| [RealESRNet_x4plus](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.1.1/RealESRNet_x4plus.pth) | X4 | X4 model with MSE loss (over-smooth effects) |
| [official ESRGAN_x4](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.1.1/ESRGAN_SRx4_DF2KOST_official-ff704c30.pth) | X4 | official ESRGAN model |

|

||||

|

||||

The following models are **discriminators**, which are usually used for fine-tuning.

|

||||

|

||||

| Models | Corresponding model |
| --- | :---- |
| [RealESRGAN_x4plus_netD](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.3/RealESRGAN_x4plus_netD.pth) | RealESRGAN_x4plus |
| [RealESRGAN_x2plus_netD](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.3/RealESRGAN_x2plus_netD.pth) | RealESRGAN_x2plus |

|

||||

|

||||

## For Anime Images / Illustrations

|

||||

|

||||

| Models | Scale | Description |
| --- | :---- | :---- |
| [RealESRGAN_x4plus_anime_6B](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.4/RealESRGAN_x4plus_anime_6B.pth) | X4 | Optimized for anime images; 6 RRDB blocks (smaller network) |

|

||||

|

||||

The following models are **discriminators**, which are usually used for fine-tuning.

|

||||

|

||||

| Models | Corresponding model |
| --- | :---- |
| [RealESRGAN_x4plus_anime_6B_netD](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.4/RealESRGAN_x4plus_anime_6B_netD.pth) | RealESRGAN_x4plus_anime_6B |

|

||||

|

||||

## For Animation Videos

|

||||

|

||||

| Models | Scale | Description |
| --- | :---- | :---- |
| [realesr-animevideov3](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.5.0/realesr-animevideov3.pth) | X4<sup>1</sup> | Anime video model with XS size |

|

||||

|

||||

Note: <br>

|

||||

<sup>1</sup> This model can also be used for X1, X2, X3.

|

||||

|

||||

The following models are **discriminators**, which are usually used for fine-tuning.

|

||||

|

||||

TODO

|

||||

@@ -5,28 +5,30 @@ import os

|

||||

from basicsr.archs.rrdbnet_arch import RRDBNet

|

||||

|

||||

from realesrgan import RealESRGANer

|

||||

from realesrgan.archs.srvgg_arch import SRVGGNetCompact

|

||||

|

||||

|

||||

def main():

|

||||

"""Inference demo for Real-ESRGAN.

|

||||

"""

|

||||

parser = argparse.ArgumentParser()

|

||||

parser.add_argument('--input', type=str, default='inputs', help='Input image or folder')

|

||||

parser.add_argument('-i', '--input', type=str, default='inputs', help='Input image or folder')

|

||||

parser.add_argument(

|

||||

'--model_path',

|

||||

'-n',

|

||||

'--model_name',

|

||||

type=str,

|

||||

default='experiments/pretrained_models/RealESRGAN_x4plus.pth',

|

||||

help='Path to the pre-trained model')

|

||||

parser.add_argument('--output', type=str, default='results', help='Output folder')

|

||||

parser.add_argument('--netscale', type=int, default=4, help='Upsample scale factor of the network')

|

||||

parser.add_argument('--outscale', type=float, default=4, help='The final upsampling scale of the image')

|

||||

default='RealESRGAN_x4plus',

|

||||

help=('Model names: RealESRGAN_x4plus | RealESRNet_x4plus | RealESRGAN_x4plus_anime_6B | RealESRGAN_x2plus | '

|

||||

'realesr-animevideov3'))

|

||||

parser.add_argument('-o', '--output', type=str, default='results', help='Output folder')

|

||||

parser.add_argument('-s', '--outscale', type=float, default=4, help='The final upsampling scale of the image')

|

||||

parser.add_argument('--suffix', type=str, default='out', help='Suffix of the restored image')

|

||||

parser.add_argument('--tile', type=int, default=0, help='Tile size, 0 for no tile during testing')

|

||||

parser.add_argument('-t', '--tile', type=int, default=0, help='Tile size, 0 for no tile during testing')

|

||||

parser.add_argument('--tile_pad', type=int, default=10, help='Tile padding')

|

||||

parser.add_argument('--pre_pad', type=int, default=0, help='Pre padding size at each border')

|

||||

parser.add_argument('--face_enhance', action='store_true', help='Use GFPGAN to enhance face')

|

||||

parser.add_argument('--half', action='store_true', help='Use half precision during inference')

|

||||

parser.add_argument('--block', type=int, default=23, help='num_block in RRDB')

|

||||

parser.add_argument(

|

||||

'--fp32', action='store_true', help='Use fp32 precision during inference. Default: fp16 (half precision).')

|

||||

parser.add_argument(

|

||||

'--alpha_upsampler',

|

||||

type=str,

|

||||

@@ -39,26 +41,42 @@ def main():

|

||||

help='Image extension. Options: auto | jpg | png, auto means using the same extension as inputs')

|

||||

args = parser.parse_args()

|

||||

|

||||

if 'RealESRGAN_x4plus_anime_6B.pth' in args.model_path:

|

||||

args.block = 6

|

||||

elif 'RealESRGAN_x2plus.pth' in args.model_path:

|

||||

args.netscale = 2

|

||||

# determine models according to model names

|

||||

args.model_name = args.model_name.split('.')[0]

|

||||

if args.model_name in ['RealESRGAN_x4plus', 'RealESRNet_x4plus']: # x4 RRDBNet model

|

||||

model = RRDBNet(num_in_ch=3, num_out_ch=3, num_feat=64, num_block=23, num_grow_ch=32, scale=4)

|

||||

netscale = 4

|

||||

elif args.model_name in ['RealESRGAN_x4plus_anime_6B']: # x4 RRDBNet model with 6 blocks

|

||||

model = RRDBNet(num_in_ch=3, num_out_ch=3, num_feat=64, num_block=6, num_grow_ch=32, scale=4)

|

||||

netscale = 4

|

||||

elif args.model_name in ['RealESRGAN_x2plus']: # x2 RRDBNet model

|

||||

model = RRDBNet(num_in_ch=3, num_out_ch=3, num_feat=64, num_block=23, num_grow_ch=32, scale=2)

|

||||

netscale = 2

|

||||

elif args.model_name in ['realesr-animevideov3']: # x4 VGG-style model (XS size)

|

||||

model = SRVGGNetCompact(num_in_ch=3, num_out_ch=3, num_feat=64, num_conv=16, upscale=4, act_type='prelu')

|

||||

netscale = 4

|

||||

|

||||

model = RRDBNet(num_in_ch=3, num_out_ch=3, num_feat=64, num_block=args.block, num_grow_ch=32, scale=args.netscale)

|

||||

# determine model paths

|

||||

model_path = os.path.join('experiments/pretrained_models', args.model_name + '.pth')

|

||||

if not os.path.isfile(model_path):

|

||||

model_path = os.path.join('realesrgan/weights', args.model_name + '.pth')

|

||||

if not os.path.isfile(model_path):

|

||||

raise ValueError(f'Model {args.model_name} does not exist.')

|

||||

|

||||

# restorer

|

||||

upsampler = RealESRGANer(

|

||||

scale=args.netscale,

|

||||

model_path=args.model_path,

|

||||

scale=netscale,

|

||||

model_path=model_path,

|

||||

model=model,

|

||||

tile=args.tile,

|

||||

tile_pad=args.tile_pad,

|

||||

pre_pad=args.pre_pad,

|

||||

half=args.half)

|

||||

half=not args.fp32)